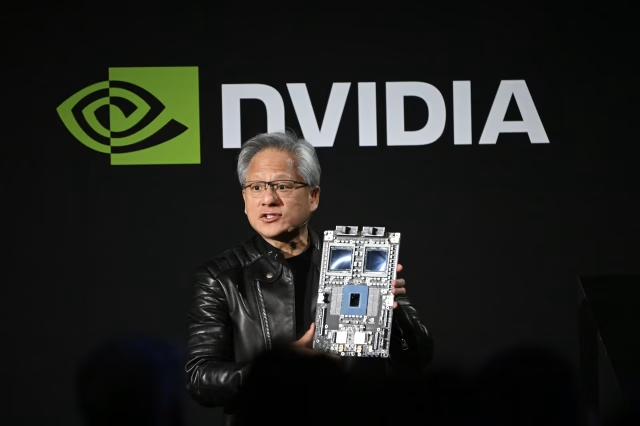

Nvidia CEO Jensen Huang has traditionally used the company’s annual developer conference in California to showcase the latest processors powering the global artificial intelligence boom.

While Huang is still expected to highlight Nvidia’s flagship graphics processing units (GPUs) during his speech on Monday, investors are increasingly focused on another key development in the AI industry: AI inference.

AI Inference Emerging as Key Topic for Nvidia

Nvidia’s chips play a central role in the powerful data centers that run modern artificial intelligence models. These processors have allowed the company to benefit from massive investments by major technology firms building AI infrastructure.

However, competition in the AI chip market is rapidly intensifying. Nvidia now faces growing pressure not only from semiconductor rivals such as Advanced Micro Devices and Intel, but also from large technology companies developing their own specialized AI processors.

For example, Alphabet has been working on custom chips designed to support artificial intelligence workloads within its data centers.

Shift From AI Training to AI Inference

A major shift is also occurring within the AI industry itself. Many companies are moving their focus from training AI models, where Nvidia’s GPUs dominate, toward AI inference: the process in which trained AI systems perform real-world tasks for users.

Inference workloads can often run on types of processors other than traditional GPUs. In addition, some of Nvidia’s largest customers, including OpenAI and Meta Platforms, have indicated they may develop their own chips optimized for inference workloads.

This transition represents a critical challenge for Nvidia as AI systems evolve from experimental models to practical digital agents capable of performing tasks for businesses and consumers.

Nvidia Investing Heavily in AI Inference Technology

As a result, investors are likely to look closely at Huang’s remarks for insight into how Nvidia plans to expand its position in the growing AI inference market.

Analysts expect Nvidia to unveil a new inference-focused processor during the event, potentially incorporating intellectual property acquired through the company’s recent purchase of AI startup Groq.

Nvidia spent approximately $17 billion in December to acquire Groq, a firm known for developing hardware designed to perform AI inference tasks quickly and at lower cost.

Huang recently stated that Groq’s technology will be integrated into Nvidia’s widely used CUDA computing platform, which powers many of the company’s AI applications.

Analysts at Mizuho noted that, on inference tasks, Groq’s hardware has demonstrated latency around 100 times lower than Nvidia’s traditional GPUs at roughly 20% of the cost.

Expanding the AI Hardware Ecosystem

Beyond processors, Nvidia has also invested roughly $2 billion in optical technology firms Lumentum and Coherent. These companies develop laser-based communication systems that use light beams to transfer data rapidly between chips.

Although these optical interconnect technologies could significantly improve the speed of AI data centers, they are still produced at a much smaller scale than Nvidia’s core GPU products.

According to analysts at BofA Securities, Nvidia is expected to announce a broader expansion of its AI hardware portfolio at the conference.

Investors will also watch for updates on potential supply challenges, including shortages of AI-related memory chips. Analysts are also paying attention to the impact of the ongoing Iran conflict on global supply chains, energy costs, and the continued expansion of AI data centers worldwide.